Cloud Backup for Linux

I routinely do backups on external hard drives using backintime. It is a tool which uses hard links to create deduplicated snapshots. Restoring is super easy: either use the tool or copy the files manually. The backup contains one directory per snapshot, named after the time it was taken, with your whole directory tree below it.
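To illustrate the hard-link idea, here is a minimal sketch. It is not how backintime works internally; the helper `snapshot` and the directory names are made up for this example, and it relies on `cp -al` from GNU coreutils, which recreates the directory tree but hard-links the files instead of copying them.

```shell
#!/bin/bash
# Toy snapshot: copy the directory tree, but hard-link the files.
# "snapshot" and all paths are hypothetical names for this illustration.
snapshot() {
    # $1 = previous snapshot, $2 = new snapshot directory
    cp -al "$1" "$2"
}

demo=$(mktemp -d)
mkdir -p "$demo/20200308-120000/home"
echo "thesis draft" > "$demo/20200308-120000/home/thesis.tex"

# The second snapshot shares the file's data blocks with the first one.
snapshot "$demo/20200308-120000" "$demo/20200414-120000"

# -ef is true when both names point to the same inode.
if [ "$demo/20200308-120000/home/thesis.tex" -ef "$demo/20200414-120000/home/thesis.tex" ]; then
    echo "same inode: stored only once"
fi
```

Because every snapshot is a plain directory tree, restoring by hand is just a regular copy out of the snapshot directory.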

As I only do these backups every couple of weeks, there is a window during which I could lose data. I also want to have backups while on the go. My PhD thesis draft is in a private GitHub repository, so I can just push to that and have a backup somewhere in the Microsoft cloud. For everything else I need something else, so I started researching this a couple of years ago. Backblaze offers a software client and unlimited cloud space for 5 USD/month, but only for Windows and macOS.

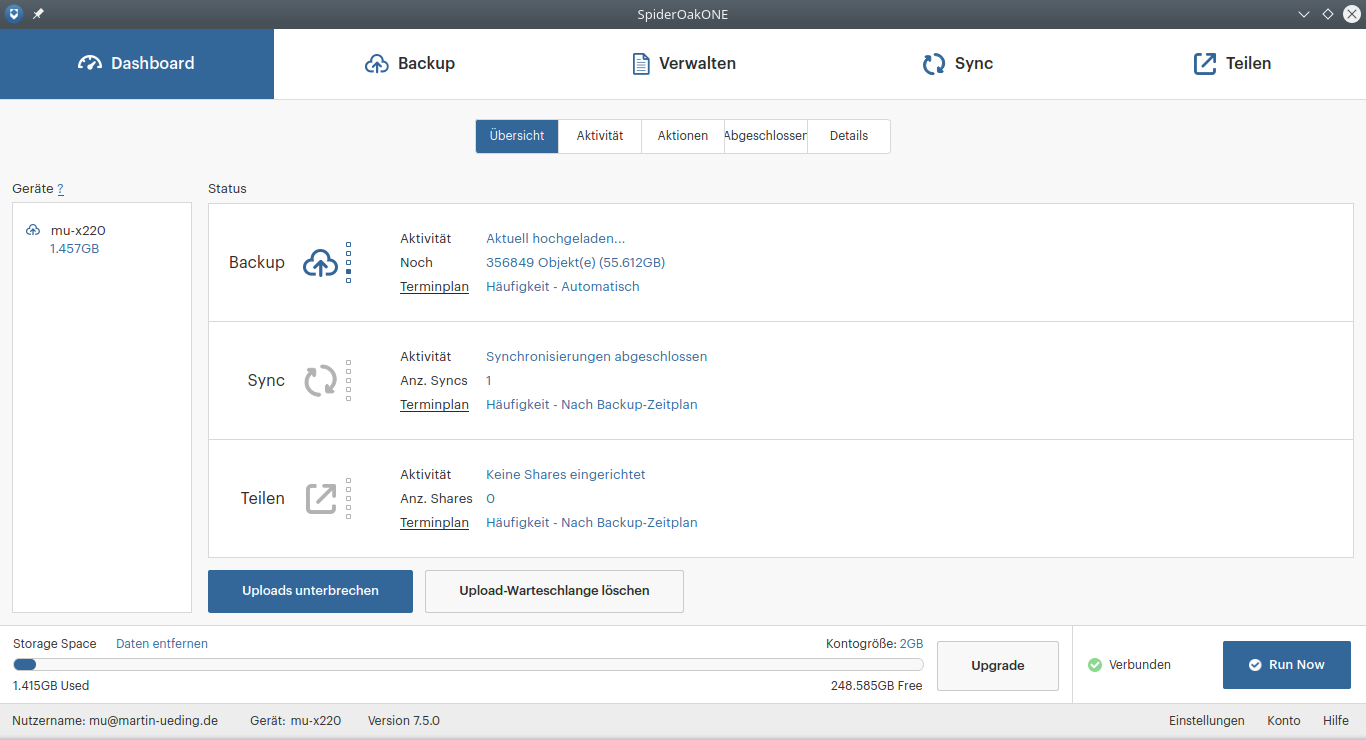

The SpiderOak service was recommended by Edward Snowden, so I tried that. It works with Linux and encrypts the data already on my laptop. I only needed around 150 GB of space, and they used to charge 10 USD/month for that tier. Their client is nice to use and I had quickly set it up with the free trial. It took a while before it actually started to upload things, and it seemed to use quite a lot of resources, I guess for encryption and file hashing.

My friend Simon, who had the same problem, discovered that one could also use duplicity with the Backblaze B2 storage backend. Their rates are really affordable, so I had a look at that. One can store unlimited data there and is billed in proportion to the amount stored. I ended up paying a few EUR/month for the service, so it was cheaper than SpiderOak.

I want to show how I did it with duplicity and why I am now back with SpiderOak.

Duplicity and Backblaze B2

For my backup I really want encryption on my device. The regular service that Backblaze offers does not encrypt on the device, so I really would not use that. But when using duplicity I can use either symmetric or asymmetric encryption right on my device. I have opted for symmetric encryption (AES) so that I only need to remember the password and do not also have a GPG key to keep track of. In the end GPG also encrypts the bulk of the data with AES, so a strong password is just as good.

Basically the following invocation just does the trick:

    PASSPHRASE=$passphrase \
        nice duplicity \
        --full-if-older-than 90D \
        --exclude-filelist ~/.config/exclude-b2.txt \
        --progress \
        --gpg-options '--cipher-algo AES256' \
        --allow-source-mismatch \
        ~/ \
        b2://$b2_key_id:$b2_key@$b2_bucket

The variables passphrase, b2_key_id, b2_key and b2_bucket need to be set to appropriate values.
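A backup script can fail early when one of these variables is missing, instead of handing duplicity a half-built URL. This is a minimal sketch; `require_vars` is a hypothetical helper and not part of duplicity.

```shell
#!/bin/bash
# Fail early when a required variable is missing or empty.
# "require_vars" is a hypothetical helper, not part of duplicity.
require_vars() {
    for name in "$@"; do
        # Indirect lookup of the variable called $name (POSIX-compatible).
        eval "value=\${$name:-}"
        if [ -z "$value" ]; then
            echo "missing variable: $name" >&2
            return 1
        fi
    done
}
```

In the script this could be called as `require_vars passphrase b2_key_id b2_key b2_bucket || exit 1` before the duplicity invocation.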

Every time the script is called, it scans for changes and uploads them incrementally. Once the last full backup is older than the given period (here 90 days), it does a full backup again.
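The rule behind `--full-if-older-than` can be sketched in a few lines. duplicity makes this decision internally from its own metadata; the function `backup_type` below is hypothetical and only illustrates the comparison, taking Unix timestamps and a limit in days.

```shell
#!/bin/bash
# Decide between a full and an incremental run from the age of the last full backup.
# Hypothetical helper; this is not duplicity's own code.
backup_type() {
    # $1 = time of last full backup, $2 = current time, $3 = maximum age in days
    if [ $(( $2 - $1 )) -gt $(( $3 * 86400 )) ]; then
        echo full
    else
        echo incremental
    fi
}
```

With GNU date, the two timestamps could come from `date -d 2020-03-08 +%s` and `date +%s`.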

It turns out that gathering the incremental changes hogs quite a few resources: duplicity needs to take the latest manifest files (still cached on the laptop) and compare them against the current files, looking at modification times. This took about 15 minutes on each run with the files that I had selected. It also drained my already weak battery, so I restricted it to run only when the charger is plugged in by adding this before the actual backup call:

    if cat /sys/class/power_supply/BAT0/status | grep Discharging > /dev/null; then
        exit 0
    fi
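The same check can be wrapped in a small function, which makes it testable and tolerant of machines without a `BAT0` entry. This is a sketch; `on_battery` is a hypothetical name, and the default path is simply the one from my laptop.

```shell
#!/bin/bash
# Return success only when the battery reports that it is discharging.
# The default path is the one used above; other machines may use BAT1 etc.
on_battery() {
    status_file=${1:-/sys/class/power_supply/BAT0/status}
    # A missing file (desktop, different battery name) counts as "not on battery".
    [ -r "$status_file" ] && grep -q Discharging "$status_file"
}
```

In the backup script, `if on_battery; then exit 0; fi` would then replace the pipeline.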

Only one instance of the script should run at a time, though duplicity will figure that out on its own. I have used flock --nonblock in my cron job to make sure that just one instance runs at any given time.
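The locking can be sketched as a small wrapper around `flock` from util-linux. The function name `run_exclusively` and the lock path are hypothetical.

```shell
#!/bin/bash
# Run a command only if no other instance holds the lock.
# "run_exclusively" and the lock path are hypothetical names.
run_exclusively() {
    lockfile=$1
    shift
    # With --nonblock, flock exits with status 1 right away instead of waiting.
    flock --nonblock "$lockfile" "$@"
}
```

In a crontab the same thing fits on one line, for example `0 * * * * flock --nonblock /tmp/backup.lock /home/user/bin/backup.sh`, where both paths are placeholders.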

Over time more and more full backups will build up. To clean them up, you can use a utility command that removes everything except the most recent ones:

    PASSPHRASE=$passphrase \
        duplicity remove-all-but-n-full 3 \
        --force \
        b2://$b2_key_id:$b2_key@$b2_bucket

The interesting part is the restore. Without a restore, a backup is worthless. And I haven't really had the need to restore yet. For testing I deleted a file which I had just backed up and restored it with this script:

    PASSPHRASE=$passphrase \
        duplicity restore \
        b2://$b2_key_id:$b2_key@$b2_bucket \
        "$@"
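Such a test gets a bit more rigorous when a copy of the file is kept aside before deleting it and the restored file is compared against that copy. A minimal sketch, with `verify_restore` as a hypothetical helper:

```shell
#!/bin/bash
# Compare a restored file with a reference copy, byte by byte.
# "verify_restore" is a hypothetical helper; both paths are arguments.
verify_restore() {
    # $1 = reference copy, $2 = restored file
    if cmp -s "$1" "$2"; then
        echo "restore OK: $2"
    else
        echo "restore differs or is missing: $2" >&2
        return 1
    fi
}
```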

That had worked just fine, so I thought that I had a solid cloud backup at hand. Well, that was until a few days ago when I wanted to restore a file which I frequently change, my e-mail mbox file. The last full backup was on 2020-03-08 and I do hourly incremental backups whenever the laptop is turned on and charging. It turns out there are a bunch of incremental changesets to that file. When I ran the restore, it picked up endless changesets, like this (my system runs in German; "Verarbeitung des lokalen Manifest" means "processing local manifest" and "1 Volumen in Manifest gefunden" means "found 1 volume in manifest"):

    Verarbeitung des lokalen Manifest b'/home/mu/.cache/duplicity/17532b308f659397a23dae3345234484/duplicity-inc.20200414T190005Z.to.20200414T200005Z.manifest' (5321)
    1 Volumen in Manifest gefunden
    Get: duplicity-inc.20200414T190005Z.to.20200414T200005Z.vol1.difftar.gpg -> temp/duplicity-a7n2sb9u-tempdir/mktemp-619jt1ub-252
    Verarbeitung des lokalen Manifest b'/home/mu/.cache/duplicity/17532b308f659397a23dae3345234484/duplicity-inc.20200414T200005Z.to.20200414T210005Z.manifest' (7297)
    1 Volumen in Manifest gefunden
    Get: duplicity-inc.20200414T200005Z.to.20200414T210005Z.vol1.difftar.gpg -> temp/duplicity-a7n2sb9u-tempdir/mktemp-z7996evy-253
    Verarbeitung des lokalen Manifest b'/home/mu/.cache/duplicity/17532b308f659397a23dae3345234484/duplicity-inc.20200414T210005Z.to.20200414T220005Z.manifest' (5983)
    1 Volumen in Manifest gefunden
    Get: duplicity-inc.20200414T210005Z.to.20200414T220005Z.vol1.difftar.gpg -> temp/duplicity-a7n2sb9u-tempdir/mktemp-alqkws80-254

But then all of a sudden it just crashed:

    Traceback (innermost last):
      File "/usr/bin/duplicity", line 100, in <module>
        with_tempdir(main)
      File "/usr/bin/duplicity", line 86, in with_tempdir
        fn()
      File "/usr/lib64/python3.8/site-packages/duplicity/dup_main.py", line 1538, in main
        do_backup(action)
      File "/usr/lib64/python3.8/site-packages/duplicity/dup_main.py", line 1618, in do_backup
        restore(col_stats)
      File "/usr/lib64/python3.8/site-packages/duplicity/dup_main.py", line 723, in restore
        if not patchdir.Write_ROPaths(globals.local_path,
      File "/usr/lib64/python3.8/site-packages/duplicity/patchdir.py", line 578, in Write_ROPaths
        for ropath in rop_iter:
      File "/usr/lib64/python3.8/site-packages/duplicity/patchdir.py", line 541, in integrate_patch_iters
        for patch_seq in collated:
      File "/usr/lib64/python3.8/site-packages/duplicity/patchdir.py", line 407, in yield_tuples
        setrorps(overflow, elems)
      File "/usr/lib64/python3.8/site-packages/duplicity/patchdir.py", line 396, in setrorps
        elems[i] = next(iter_list[i])
      File "/usr/lib64/python3.8/site-packages/duplicity/patchdir.py", line 126, in difftar2path_iter
        tarinfo_list = [next(tar_iter)]
      File "/usr/lib64/python3.8/site-packages/duplicity/patchdir.py", line 355, in __next__
        self.set_tarfile()
      File "/usr/lib64/python3.8/site-packages/duplicity/patchdir.py", line 348, in set_tarfile
        self.current_fp = next(self.fileobj_iter)
      File "/usr/lib64/python3.8/site-packages/duplicity/dup_main.py", line 759, in get_fileobj_iter
        yield restore_get_enc_fileobj(backup_set.backend,
      File "/usr/lib64/python3.8/site-packages/duplicity/dup_main.py", line 804, in restore_get_enc_fileobj
        fileobj = tdp.filtered_open_with_delete(u"rb")
      File "/usr/lib64/python3.8/site-packages/duplicity/dup_temp.py", line 125, in filtered_open_with_delete
        fh = FileobjHooked(path.DupPath.filtered_open(self, mode))
      File "/usr/lib64/python3.8/site-packages/duplicity/path.py", line 802, in filtered_open
        return gpg.GPGFile(False, self, gpg_profile)
      File "/usr/lib64/python3.8/site-packages/duplicity/gpg.py", line 225, in __init__
        p1 = gnupg.run([u'--decrypt'], create_fhs=gnupg_fhs,
      File "/usr/lib64/python3.8/site-packages/duplicity/gpginterface.py", line 374, in run
        process = self._attach_fork_exec(gnupg_commands, args,
      File "/usr/lib64/python3.8/site-packages/duplicity/gpginterface.py", line 403, in _attach_fork_exec
        pipe = os.pipe()
    OSError: [Errno 24] Too many open files

I have already filed this bug with the project. My takeaway is that one must not do that many incremental backups. The last full backup was on 2020-03-08, and the restore crashed on an incremental backup from 2020-04-14. So perhaps going a month without a full backup is fine on my hourly schedule; perhaps it would crash as well. It is not really clear to me where the limit is.
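A rough estimate helps to put the error into perspective. From 2020-03-08 to 2020-04-14 there are 37 days, so an hourly schedule can produce at most 37 × 24 = 888 increments, fewer if the laptop was off at times. Many Linux systems default to a soft limit of 1024 open files per process. Whether each increment really costs one or more file descriptors during a restore is an assumption read off the traceback, not something confirmed by the duplicity developers. The sketch below counts the incremental manifests in a cache directory and prints the current limit; `count_incrementals` is a hypothetical helper and the file name pattern is taken from the log output above.

```shell
#!/bin/bash
# Count the incremental manifests in a duplicity cache directory.
# Using this count as a proxy for the length of the restore chain is an
# assumption; the directory may also contain older chains.
count_incrementals() {
    # $1 = cache directory, e.g. a subdirectory of ~/.cache/duplicity
    find "$1" -maxdepth 1 -name 'duplicity-inc.*.manifest' | wc -l | tr -d ' '
}

# Soft limit on open file descriptors for this shell.
echo "open file limit: $(ulimit -n)"
```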

Additionally it needs a lot of temp space to unpack and process all these changesets. The way that duplicity works is by bundling the changes into tar archives and uploading them. It assumes that storage is linear and cannot be changed, only added to. This makes it great for tape backup and for just dumping more and more files into a vault. But retrieving a single file is not really great, as the file has to be rebuilt from the full backup and every incremental update since.
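The cost of such an append-only chain can be shown with a toy model. This is a simplification under stated assumptions: real duplicity stores rsync-style deltas and encrypts each volume, whereas the archives here just contain whole files. The function `restore_chain` and all file names are made up for the example.

```shell
#!/bin/bash
# Toy model of an append-only backup chain: one full archive plus increments.
# Unlike duplicity, every archive here holds whole files, not deltas.
restore_chain() {
    # $1 = target directory, remaining arguments = archives, oldest first
    target=$1
    shift
    mkdir -p "$target"
    for archive in "$@"; do
        # Every archive in the chain has to be read, even for a single file.
        tar -xf "$archive" -C "$target"
    done
}

work=$(mktemp -d)
mkdir "$work/src"
echo "mail 1" > "$work/src/mbox"
tar -cf "$work/full.tar" -C "$work/src" mbox
echo "mail 2" >> "$work/src/mbox"
tar -cf "$work/inc1.tar" -C "$work/src" mbox
echo "mail 3" >> "$work/src/mbox"
tar -cf "$work/inc2.tar" -C "$work/src" mbox

restore_chain "$work/restored" "$work/full.tar" "$work/inc1.tar" "$work/inc2.tar"
cat "$work/restored/mbox"
```

The work grows with the number of archives since the last full backup, which is why a frequently changed file on an hourly schedule is the worst case.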

SpiderOak One

Although this construct uses only free software, encrypts everything on my laptop and costs only a little, I'm not happy with it. A backup from which I cannot properly restore a file is not worth anything at all. So I took a look at the SpiderOak One backup service again. To my delight, the 150 GB tier is now 6 USD/month, down from 10 USD/month. I only have around 50 GB of frequently changed data, so that is a nice fit.

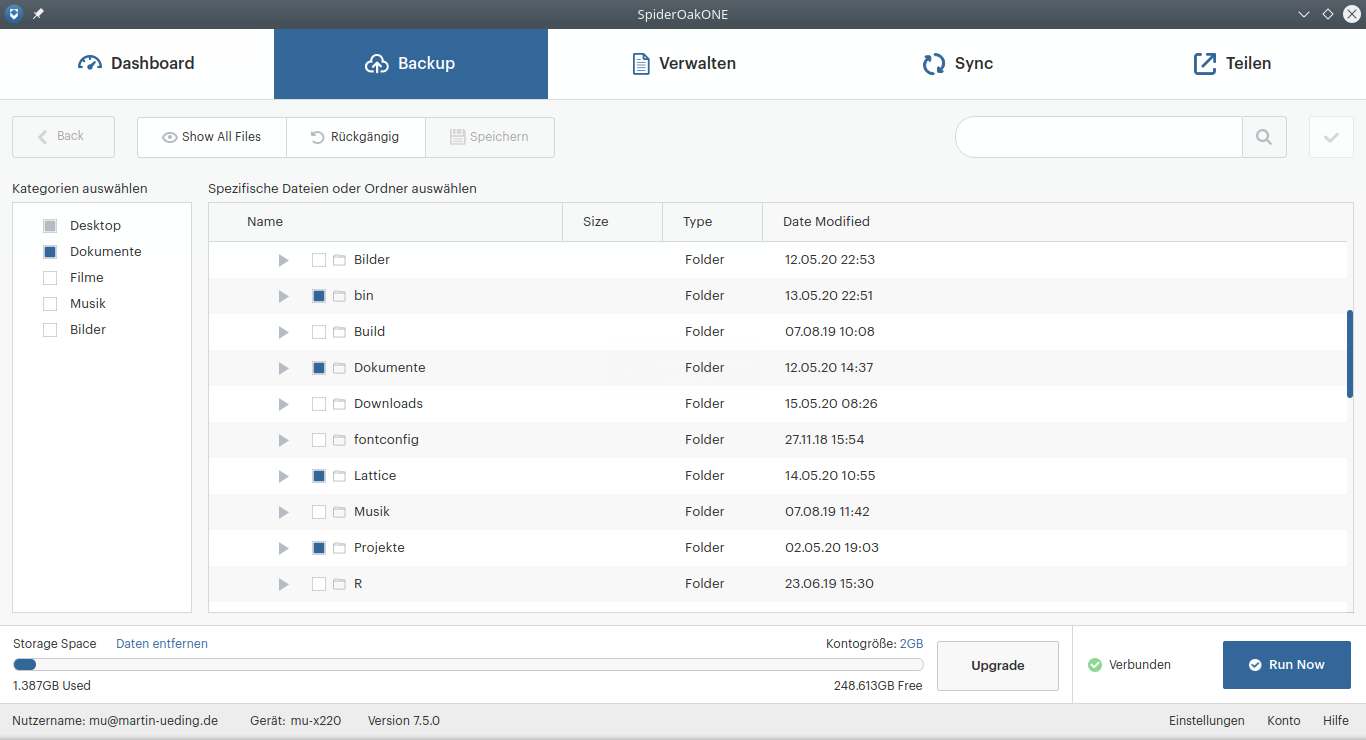

One just selects the files to upload in the GUI:

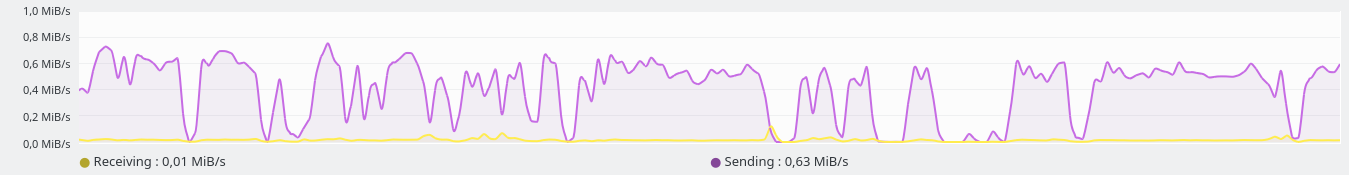

The initial upload always takes some time, and the bandwidth was not saturated all of the time:

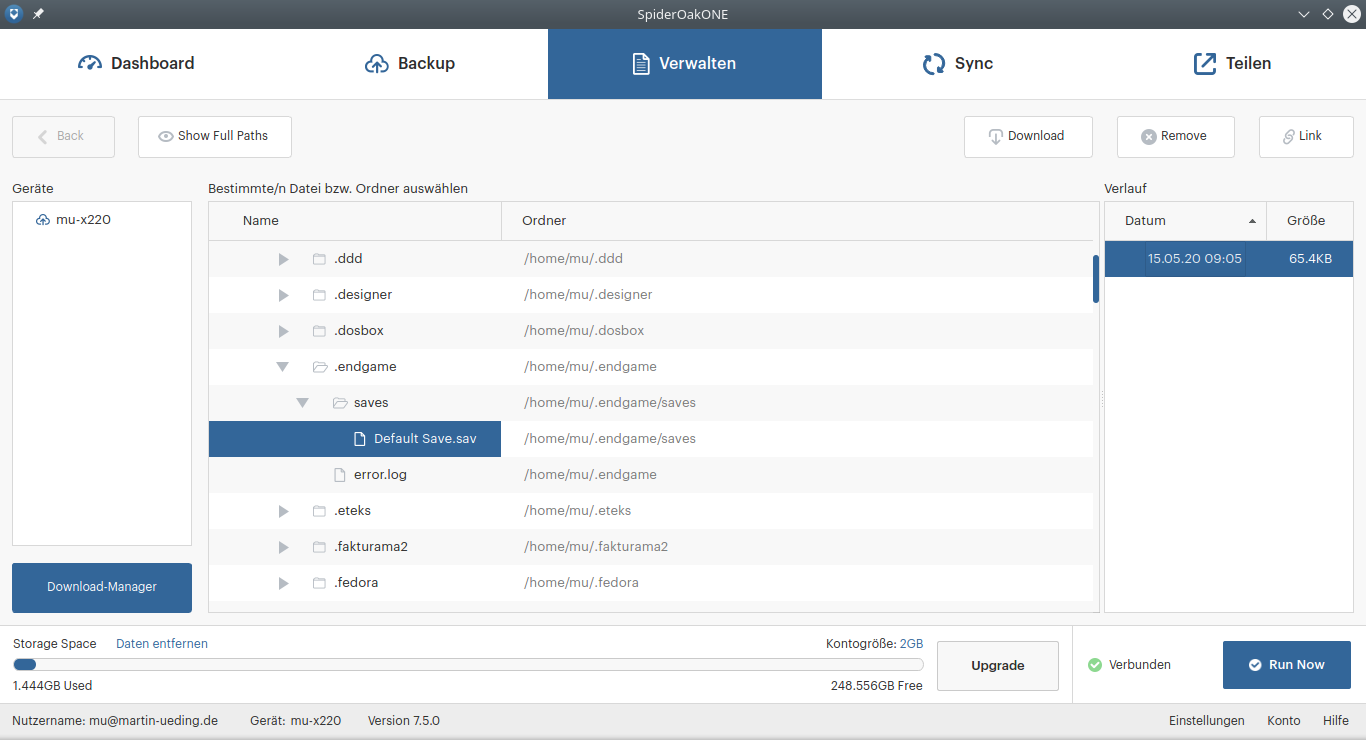

With duplicity the upload seems to saturate the bandwidth better, but on the other hand it also takes quite a lot of time to stage the backup on the local machine. I assume that SpiderOak manages the incremental backups on the server side. They likely store each updated file individually and have deduplication and versioning on a file level. This makes it much easier to restore just a single file. I simply do it in the file explorer, where I can see all the dates on which the file has been changed.

Or one can just download a whole directory from that panel.
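What deduplication on a file level means can be sketched with a toy content-addressed store. This only illustrates the guess above and is not a description of SpiderOak's actual storage format; `store_file` and the store layout are hypothetical, and `sha256sum` comes from GNU coreutils.

```shell
#!/bin/bash
# Toy content-addressed store: each file version is saved under its SHA-256
# hash, so identical content is stored only once.
store_file() {
    # $1 = store directory, $2 = file to store; prints the object name
    hash=$(sha256sum "$2" | cut -d ' ' -f 1)
    mkdir -p "$1"
    [ -e "$1/$hash" ] || cp "$2" "$1/$hash"
    echo "$hash"
}
```

With such a layout, restoring one version of one file means fetching a single object, with no chain of increments to replay.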

I'll be taking a closer look at it during the trial, but I am hopeful that it works better for me than the construct of duplicity with Backblaze B2. I also need to see what the CPU usage is like while the program is running. With duplicity I had one CPU core saturated for around 15 minutes every time I ran the backup. With SpiderOak One I also see saturation during the initial upload; I'll have to get a feel for the incremental parts.